AI-Consumable Services — When AI Becomes Your Customer

January 28, 2026

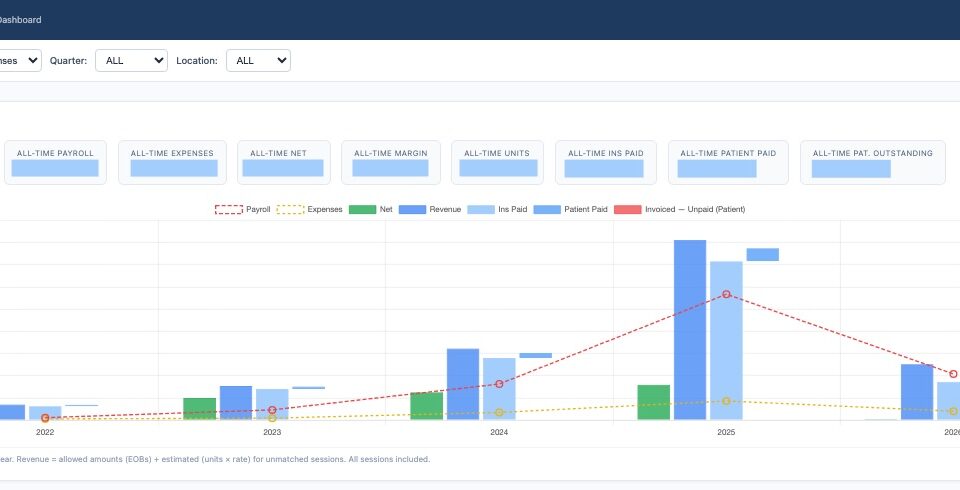

Using Claude Code to Make Sense of Small Business Data

April 25, 2026

I’ve interviewed hundreds of product managers, designers, and AI practitioners over the years, and one pattern shows up consistently: our hiring processes look polished and structured, but they often miss what actually matters once the person is on the job.

We tend to optimize for signals that are easy to evaluate. Strong resumes. Crisp answers to product scenarios. Familiarity with tools and frameworks. These are not useless, but they are incomplete. They create a sense of confidence in the interview, but that confidence does not always hold up in real work environments.

The gap exists because real product work is messy. It involves judgment, not just knowledge. People are making decisions with incomplete information, navigating tradeoffs, and influencing others without formal authority. None of this shows up cleanly in a 45-minute interview loop, no matter how well designed it is.

So the question becomes: what should we actually look for?

The first is how candidates think. Can they take a vague, poorly defined customer problem and break it down into something actionable? Can they make a decision when the data is incomplete or conflicting? This is where many candidates struggle, even if they interview well. Articles like How to hire a Product Manager Retro by Ken Norton do a good job explaining why structured thinking and judgment matter far more than textbook answers.

The second is how they operate. Can they move from ambiguity to action without waiting for perfect clarity? Can they work across functions and deal with real constraints like timelines, dependencies, and limited resources? In practice, this is what determines whether someone can actually ship. Frameworks can help, but execution in messy environments is what differentiates strong operators. There are some useful perspectives from Shane Allen on hiring product designers, especially around evaluating how candidates have worked in real team settings.

The third is how they create impact. This is the most important and the hardest to evaluate. It is easy to talk about activity and outputs. It is much harder to understand whether someone’s work actually moved a meaningful metric or changed a business outcome. For product managers, this means going deep into decisions and tradeoffs. For designers, it means understanding how customer thinking translated into real user outcomes. Books like The Product Manager Interview by Lewis C. Lin touch on this, but in practice, evaluating impact requires much deeper probing than standard interview questions allow.

There are two practical ways to evaluate all of this. One is to go deep into a candidate’s past work and really understand what they did, why they did it, and what changed because of it. The other is to bring them into situations that are close to your actual business. Not polished case studies, but realistic and imperfect scenarios. When you do this, you start to see how they think and operate under conditions that resemble real work.

One simple guideline has worked well for me across roles. Put candidates in situations that are slightly uncomfortable and incomplete. Give them just enough context to start, but not enough to feel fully prepared. Real work rarely comes with perfect clarity, and this approach surfaces how they handle ambiguity far better than rehearsed answers.

All of this becomes even more important in an AI-driven world. It is getting easier to acquire surface-level skills. Tools can help people produce decent outputs quickly. But fundamentals like judgment, problem framing, and the ability to translate ideas into real business impact are becoming more valuable, not less. The best product, design, and AI talent will be the ones who can adapt as the playbook evolves, while still delivering consistent outcomes.

Hiring, at its core, is not about interview performance. It is about predicting job performance. The closer your process gets to real work, the better your decisions will be.